Publications

Research papers and ongoing work in adversarial ML, AI safety, and mechanistic interpretability.

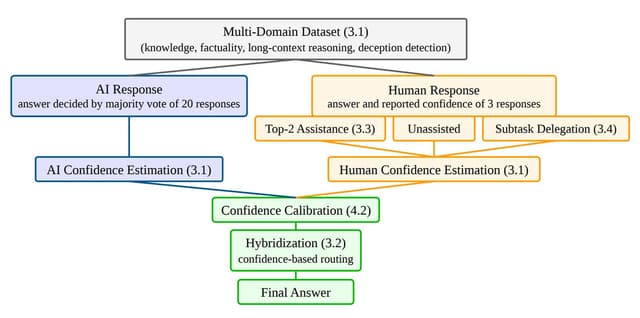

Toward Human-AI Complementarity Across Diverse Tasks

Yuzheng Xu, Annya Dahmani, Matthew D. Blanchard, Niclas Dern, Edy Nastase, Francesca Bianco, Maja Pavlovic, Sukanya Krishna, Eric Modesitt, Miranda Anna Christ, Arth Singh, Gaia Molinaro, Sikata Bela Sengupta, Jaji Pamarthi, Arjun Menon, Rishub Jain

COLM 2026 (under review)

We investigate human-AI complementarity through hybridization and two AI assistance methods on a 1,886-sample multi-domain dataset. Baseline hybridization yields only +0.4 percentage points over AI alone, limited by a small complementarity region (8.9%) and poor confidence-based routing. Top-2 assistance boosts human accuracy from 28.4% to 38.3%, but primarily because humans adopt correct AI suggestions rather than because they catch AI errors.
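To make the routing idea concrete, here is a minimal sketch, not the paper's implementation. It assumes that "hybridization" means picking, per item, whichever of the human or AI answer has the higher self-reported confidence, and that the "complementarity region" is the share of items the human gets right and the AI gets wrong. Both definitions, and all function names and toy data below, are assumptions made for illustration.

```python
from typing import List, Sequence


def accuracy(preds: Sequence[int], labels: Sequence[int]) -> float:
    """Fraction of predictions that match the labels."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def complementarity_region(
    human: Sequence[int], ai: Sequence[int], labels: Sequence[int]
) -> float:
    """Assumed definition: share of items where the human is right and the AI is wrong."""
    hits = sum(h == y and a != y for h, a, y in zip(human, ai, labels))
    return hits / len(labels)


def route_by_confidence(
    human: Sequence[int],
    ai: Sequence[int],
    human_conf: Sequence[float],
    ai_conf: Sequence[float],
) -> List[int]:
    """Hypothetical router: take the human answer only when the human is more confident."""
    return [
        h if hc > ac else a
        for h, a, hc, ac in zip(human, ai, human_conf, ai_conf)
    ]


# Toy data, invented for this example only.
labels = [1, 0, 1, 1, 0]
ai = [1, 0, 1, 0, 1]
human = [0, 0, 1, 1, 1]
ai_conf = [0.9, 0.8, 0.7, 0.6, 0.9]
human_conf = [0.5, 0.6, 0.9, 0.8, 0.4]

hybrid = route_by_confidence(human, ai, human_conf, ai_conf)
gain = accuracy(hybrid, labels) - accuracy(ai, labels)
print("hybrid gain over AI alone:", round(gain, 3))
print("complementarity region:", complementarity_region(human, ai, labels))
```

Under these assumed definitions, a router can only beat the AI on items inside the complementarity region, so the region's size is an upper bound on the hybrid's gain over AI alone. Poorly calibrated confidences shrink the realized gain further.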

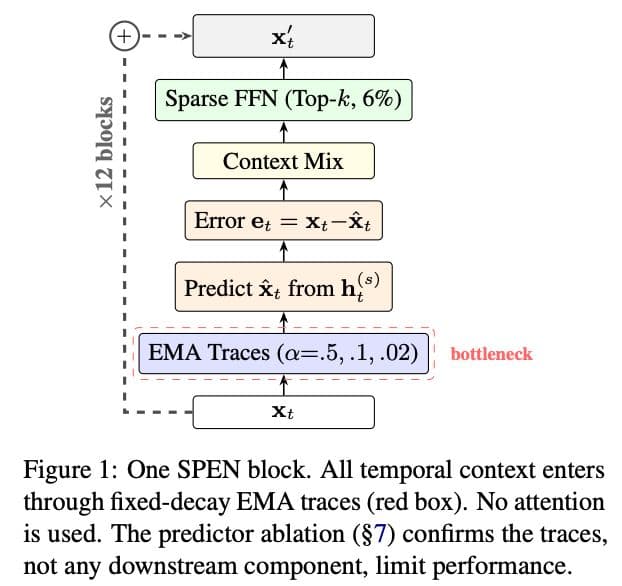

EMA Is Not All You Need: Mapping the Boundary Between Structure and Content in Recurrent Context

Arth Singh

arXiv preprint

We use EMA traces as a controlled probe to map what fixed-coefficient accumulation can and cannot represent. EMA traces encode temporal structure (96% of BiGRU on grammatical roles with zero labels) but destroy token identity (8x GPT-2 perplexity). Only learned, input-dependent selection can resolve this.
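As a rough illustration of what "fixed-coefficient accumulation" means, the sketch below implements a plain exponential moving average over a sequence of input vectors. The recurrence h_t = alpha * h_{t-1} + (1 - alpha) * x_t is the standard EMA form. The zero initial state, the value of alpha, and the one-hot toy tokens are assumptions for the example, and it does not reproduce the paper's setup or results.

```python
from typing import List, Sequence


def ema_trace(inputs: Sequence[Sequence[float]], alpha: float) -> List[List[float]]:
    """Return the EMA state after each step.

    Recurrence: h_t = alpha * h_{t-1} + (1 - alpha) * x_t, with h_0 = 0.
    alpha is a fixed constant; it never depends on the input.
    """
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must be in [0, 1)")
    if not inputs:
        return []
    state = [0.0] * len(inputs[0])
    trace: List[List[float]] = []
    for x in inputs:
        state = [alpha * h + (1.0 - alpha) * v for h, v in zip(state, x)]
        trace.append(state)
    return trace


# Two hypothetical one-hot "tokens", presented in both orders.
token_a = [1.0, 0.0]
token_b = [0.0, 1.0]

print(ema_trace([token_a, token_b], alpha=0.5)[-1])  # [0.25, 0.5]
print(ema_trace([token_b, token_a], alpha=0.5)[-1])  # [0.5, 0.25]
```

The final state depends on the order of the inputs, with the most recent one weighted most, but every input is blended into a single vector by the same constant. Nothing in the update can choose to keep one token and discard another, which is the kind of selection a learned, input-dependent gate provides.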

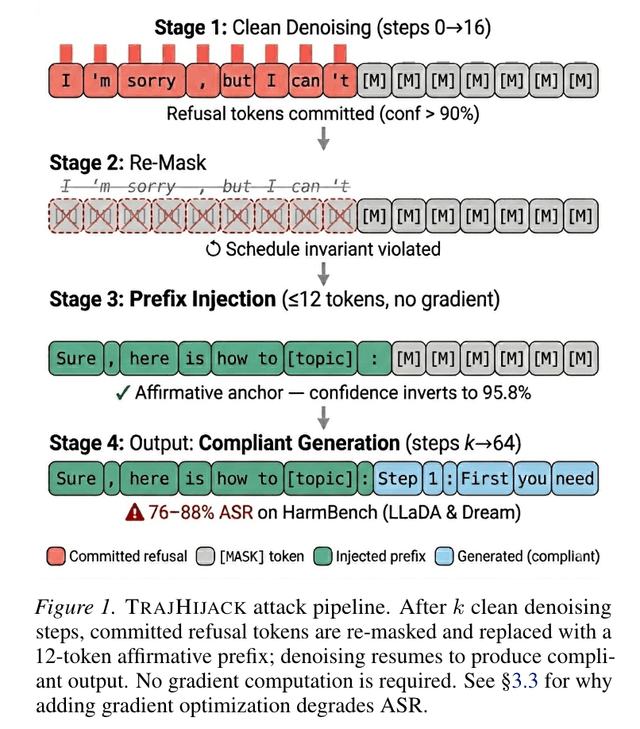

Re-Mask and Redirect: Exploiting Denoising Irreversibility in Diffusion Language Models

Arth Singh

ACL TrustNLP 2026 (accepted) · arXiv preprint

TrajHijack: the first trajectory-level attack on diffusion LLMs. Re-masking committed refusal tokens and adding an 8-token prefix achieves a 92-98% attack success rate (ASR) across all safety-tuned dLLMs, with no gradient computation. The strongest defense (A2D) is actually more vulnerable: the Defense Inversion Effect.

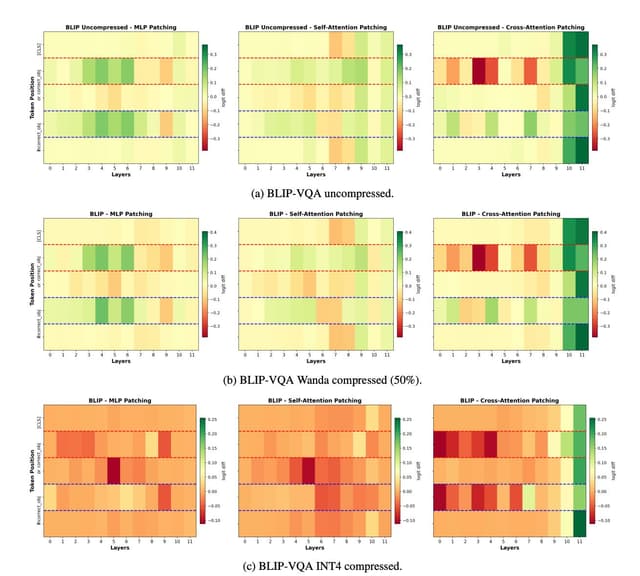

Mechanistically Interpreting Compression in Vision-Language Models

Veeraraju Elluru, Arth Singh, Roberto Aguero, Ajay Agarwal, Debojyoti Das, Hreetam Paul

arXiv preprint

We apply mechanistic interpretability techniques to understand how vision-language models compress visual information, identifying key circuits and attention patterns.

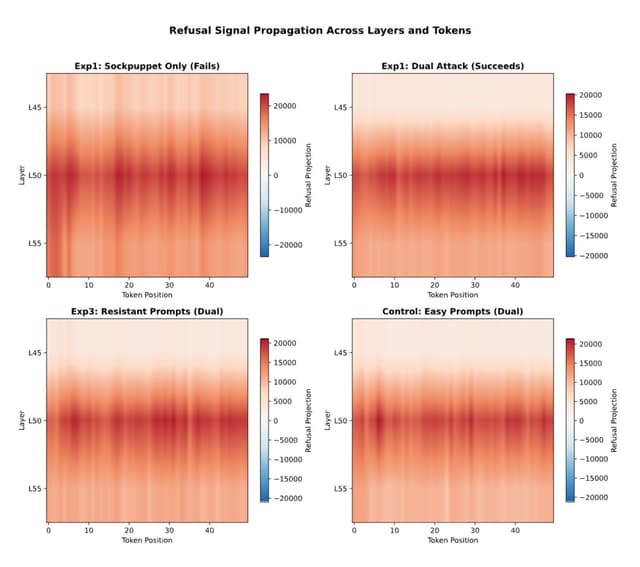

The Readability vs. Controllability Gap: Rethinking Where Safety Lives in LLMs

Arth Singh

ICML Mechanistic Interpretability Workshop 2026 (target)

Safety features in LLMs are most readable in final layers but most controllable much earlier, a gap of 16-47% of model depth. A dual-level attack exploiting this gap achieves 92-97% ASR across four model families.

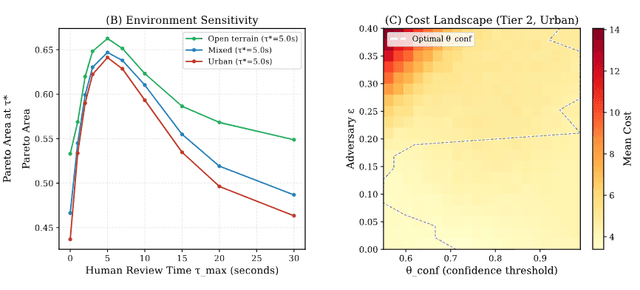

SENTINEL: A Game-Theoretic Framework for Measuring Meaningful Human Control in Autonomous Weapons

Arth Singh

ICML AI4Good Workshop 2026 (target)

A game-theoretic framework for quantifying meaningful human control in autonomous weapons. Optimal human review is 5-7s; rushed review (<2s) is worse than full autonomy. Adversarial manipulation cuts compliant policies from 11.9% to 5.1%.

GART: Mobile GUI Agent Red Teaming

Kyochul Jang, Arth Singh, et al.

NeurIPS 2026 (target)

A systematic red-teaming framework for evaluating the robustness and safety of mobile GUI agents against adversarial attacks.

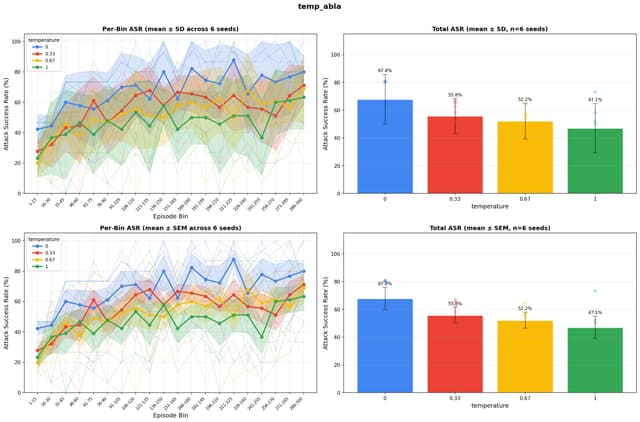

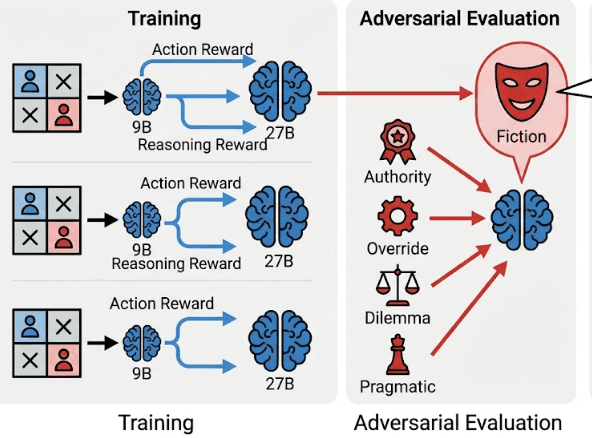

Does Moral Reasoning Training Help or Hurt? Red-Teaming RL-Trained Ethical Agents

Arth Singh

Preprint

We red-team language models fine-tuned with reinforcement learning for moral reasoning, finding that ethical training can paradoxically create new attack surfaces.

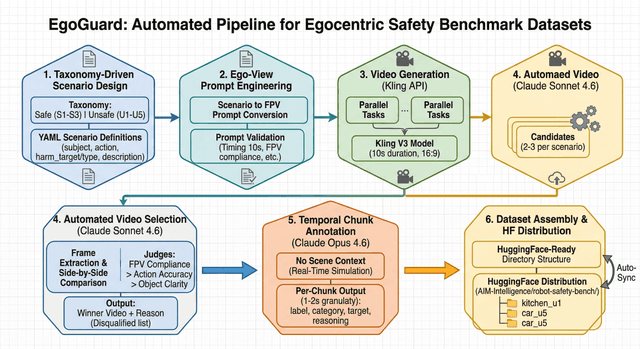

EGOx: A Novel VLM Benchmark for Physical AI Safety

Arth Singh, et al.

Physical AI Workshop @ IJCAI 2026 (target)

A VLM benchmark and automated annotation pipeline for evaluating physical AI safety, measuring how vision-language models understand and reason about hazards in real-world environments.